As an instructional designer, I often have clients come to me with a list of topics that they want to train via elearning, or a current training that they want to convert to elearning. For example, a client once told me that she wanted a two-hour in-person project management training. The first thing I asked was “What should be the outcome of the training?” Inevitably, we ended up at a place where the awful truth was acknowledged: she didn’t know what the outcome of the training should be. Of course, without knowing what the outcome should be (other than that x number of people were trained), there’s no way to measure the impact of the training or determine whether the training was any good.

It can be a difficult conversation, particularly if the idea of training is mandated by a superior, and the client you’re speaking with is really just the messenger. It’s equally difficult when dealing with a subject matter expert who has invested their heart and soul into developing a training, but has a blind spot when it comes to correlating training to performance. If you’ve ever been in this situation, either as an instructional designer, subject matter expert, or client, I have a few tips that can help you see the light.

#1 Know the difference between assessment and analysis

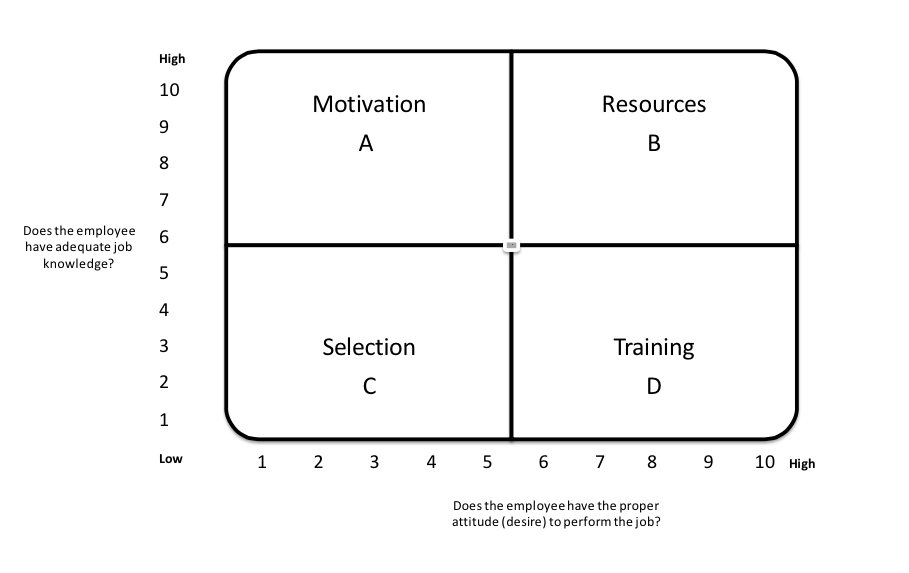

Needs assessment and needs analysis are often conflated, and in some cases the latter is ignored entirely. It’s important to recognize that needs assessment identifies gaps (the what), while needs analysis identifies the cause of the gaps (the why). For example, knowing that project management is an issue in your organization is not the same as knowing why people fail to manage projects successfully. Maybe this is happening because you haven’t given employees the right motivation or the right tools, or perhaps you haven’t hired the right people or haven’t placed them in the appropriate roles. There’s a great tool called the Performance Analysis Quadrant that can help you ask the right questions and start to understand the cause of the performance gaps. There’s also a performance analysis process designed by professors Robert Mager and Peter Pipe (often called the performance analysis flowchart or flow diagram) that walks you through similar questions and helps you start thinking about ways to close the gaps.

#2 Begin with the end in mind

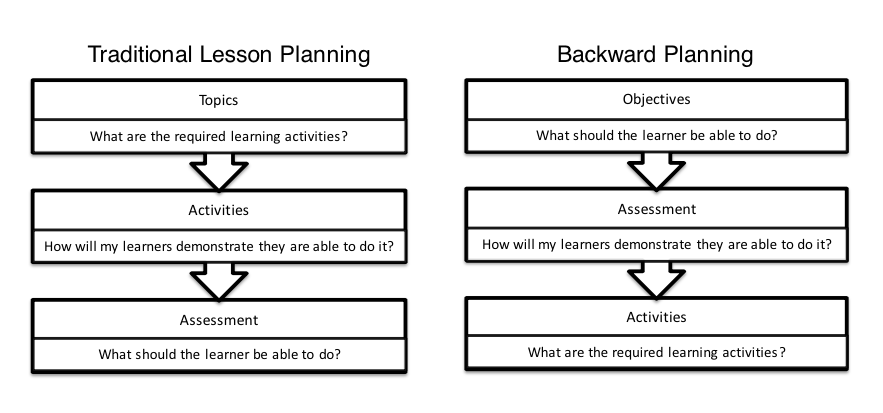

Once you know the root cause of a performance gap and have determined that training is an appropriate solution, you can begin another type of analysis, called instructional analysis. Instructional analysis is all about removing unnecessary training content and activities and focusing on what’s necessary to reach the instructional goal. But before you get there, of course, you need to clarify the objectives. This is a shift in thinking for most people, not least trainers and teachers. Lesson planning, for example, typically begins with the content to be taught. From there, a teacher will determine how to teach the lesson using the content they have been given, and then, lastly, determine how to assess whether the material has been learned. The truth: this model is ridiculous. It’s the reason why millions of dollars are invested in training programs that are evaluated on the basis of smile sheets and attendance, and why students fail tests even though they are able to perform. A better way is to begin with objectives and let them drive the rest of the process.

#3 Use a blended learning approach

It might seem odd coming from someone who designs elearning courses, but elearning is not always the solution. In fact, it is not uncommon for performance problems to require a combination of solutions, such as mlearning, on-the-job training, mentoring and job aids. But a blended learning approach to training (other than, say, distance education in higher ed) shouldn’t be about providing a mix of options on general principle; it should be about conducting analysis to determine which solutions are needed to close the performance gaps and implementing only those solutions that will lead to the desired outcome.

Let’s go back to the example of a project management training. In our example, the client wanted to create a two-hour workshop on project management. Let’s say she decided to add a self-paced elearning version of the same workshop for the people who cannot attend in person, and an hour-long webinar version. On the surface, this might sound like a reasonable approach. But again, without conducting a performance analysis and taking into consideration the full context and possible influences on performance, this entire approach could be a waste of time. What if employees simply need better project management tools? What if there’s one bad employee who is at the root of the project management issues? What if the culture isn’t truly collaborative and that’s the problem?

#4 ADDIE or ~~AD~~DIE

ADDIE is an instructional design methodology in which each letter represents a phase in the process. The phases are analysis, design, development, implementation and evaluation. In my original example, I presented a case where my client asked me to skip the first two phases and jump right into development. If you skip analysis and design, you risk setting your program up for failure. If you don’t establish measures of success before you develop the program, you will struggle to demonstrate its value after it has been implemented. Businesses and organizations are finally noticing this, and the emphasis on analytics has been steadily on the rise. Performance analysis is the key to identifying why a performance gap exists and how to close it. Skipping performance analysis is like throwing spaghetti against a wall to see what sticks.